Swarm vs Micro vs Nano

Destination-less Mobility — Driving Without Arrival

We decide where to go, and the vehicle performs the physical task of getting us there.

Why Strategy Must Be Designed Around Scenarios, Not Predictions

Strategy has long been associated with prediction.

Forecasting trends.

Estimating probabilities.

Choosing the most likely future and preparing for it.

Why Information Is Not Delivered, but Interpreted

Information is often treated as something that moves from one place to another.

A message is sent.

A signal is received.

Meaning is assumed to transfer intact.

Why AI-Native Organizations Must Be Designed Around Roles, Not Tools

When organizations talk about becoming “AI-native,” the conversation almost always begins with tools.

Which model to use.

Which platform to adopt.

Which workflow to automate.

Why Technology Ethics Is About Designing Responsibility Structures, Not Rules

When technology ethics is discussed, it is often framed as a list.

Things a system should not do.

Boundaries that must not be crossed.

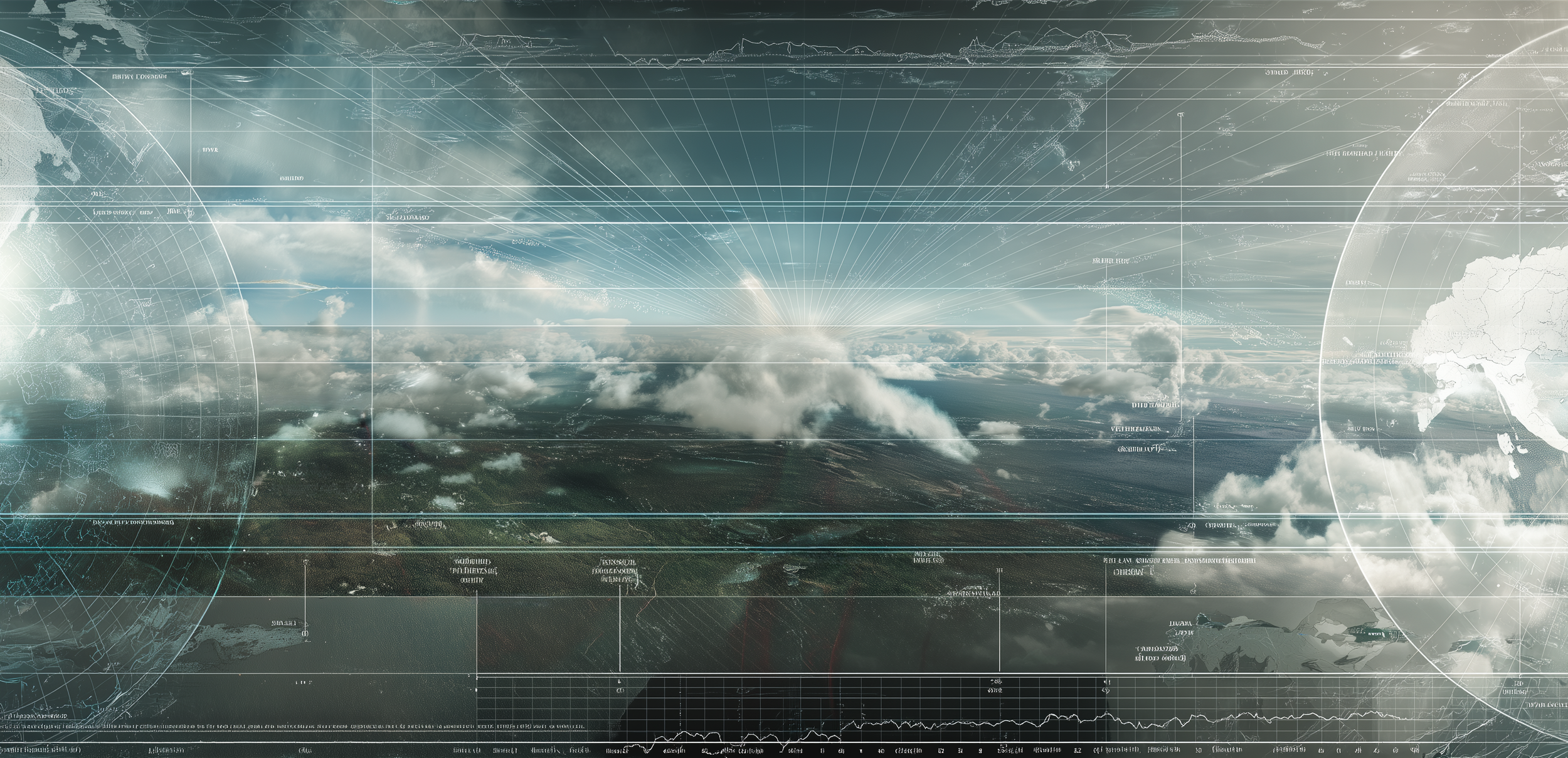

Why Autonomous Systems Must Be Designed Around Judgment and Stopping, Not Just Movement

Autonomous systems are often discussed in terms of motion.

How smoothly they move.

How fast they react.

How efficiently they navigate space.

Why UX in High-Risk Environments Is About Reducing Misinterpretation, Not Convenience

In consumer products, UX is often associated with ease.

Faster flows, fewer steps, intuitive interactions.

In high-risk industrial environments, that assumption breaks down.

Why Generative AI Is Not a Tool for Producing Answers, but for Designing Judgment

Generative AI often feels intelligent.

It speaks fluently, produces convincing outputs, and responds with confidence.

In many cases, it appears to know what it is doing.

Why Modern Warfare Should Be Understood as an “Operational Structure,” Not a Weapon

For a long time, warfare has been explained through the lens of weapons.

Range, accuracy, destructive power, speed.

Combat capability was reduced to specifications, and strategy became a question of acquiring more powerful tools.